Agentic That "Almost Works" Doesn't Work


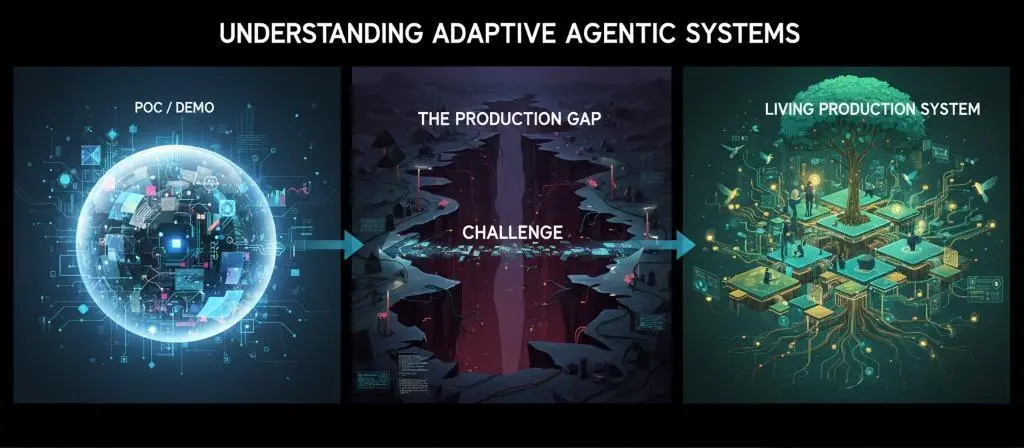

Understanding Adaptive Agentic Systems

Agentic systems are everywhere. We talk about them, build them, sell them. But between impressive demos and systems that hold up in production, there's a chasm that few manage to cross.

This three-part series explores this chasm and how to bridge it.

Part 1 - Agentic That "Almost Works" Doesn't Work

We lay the conceptual foundations: why current agentic systems struggle to move from POC to production, what systematic pitfalls exist, and what principles to build on to avoid them. The problem isn't that they crash. It's that they work "sort of" and never reach the level of reliability that production requires. More importantly, we don't know how to improve them, because we never equipped the system for that from the start.

Part 2 - Adaptive Agentic: The Principles of AI That Grows

We move to the vision. The principles of adaptive agentic systems, how to build systems that don't just execute but observe, learn, and grow. We explore the architectural pillars that make this vision possible.

Part 3 - Putting Adaptive Agentic into Production, Illustrated with RAISE

→ Part 3a: Architecture, design and operationalization

→ Part 3b: Multi-agent orchestration and dynamic knowledge graph

We move to implementation. How these principles translate concretely into RAISE, the generative AI platform of the SFEIR group. What architectures, design choices, and mechanics to put in place. From concept to operational reality.

Because between being right on paper and running a system in production, there's a whole world. And it's precisely this world that we're going to explore.

In this first part of our series on agentic systems, we diagnose the problem: why current agentic systems struggle to move from POC to production, and what systematic pitfalls doom them. They don't crash. They work "sort of," without ever reaching the reliability that production demands, and we don't know how to improve them because we never equipped the system for that from the start.

The Problem We Refuse to See

I've seen many demos over the past few months. Impressive architectures: 12 specialized agents, 47 conditional branches, graphs that take up the entire screen. They run perfectly (well, almost). At least, the agentic system appears to do what we ask.

And three months later, it's still the same. It works in the same cases, stumbles on the same limitations. The system has learned nothing.

The same goes for off-the-shelf solutions sold as autonomous agents. Their autonomy is often very relative. The system may learn, but control of this learning remains with the designer, not the client. You can't adapt it to your context or evolve it as your needs change. It's autonomy under constraint.

And above all, it's autonomy that doesn't survive real-world conditions. The system is autonomous until it isn't...

"It's not the fall that kills you, it's the landing" - Hubert, La Haine

The system has learned nothing, or has learned in a way we can't control. It hasn't evolved as we would have wanted. It has simply executed, over and over, the same logic frozen in its architecture. It's beautiful to look at, but dead inside.

That's the real problem with agentic systems today: we build systems that act, but don't learn, or learn poorly. And that don't survive scaling up.

The Trap of the POC That Almost Works

So there's this trap, perhaps the most insidious of all in generative AI exploration: the deceptive ease of the POC.

Setting up an agentic system that almost works is incredibly easy today. In just a few hours, you have a demo that impresses. The agent responds, chains actions together, seems to understand.

You test 5-6 use cases: "Create a client entity for me," "Retrieve info for project X," "Generate a report on Y." It passes. The agent creates the entity, finds the project, produces the report. Everyone's happy.

And then you try to move to production.

That's where the dream crashes into reality.

A user asks: "Create a client for the Mercury project with the same info as the Apollo project client." The agent searches for Apollo, can't find it (it's called "Apollo 2024" in the database), and asks for clarification. The user rephrases. The agent creates the client but forgets to copy the billing address, because the workflow hadn't anticipated this clarification back-and-forth. Three exchanges for something that should have taken one. And the worst part: the system didn't detect that it had already made this error with other users last week, didn't notify anyone, and certainly didn't self-correct.
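The missing piece in this scenario, noticing that the same failure keeps happening, can be sketched in a few lines. This is a minimal illustration, not a monitoring product: `FailureLog`, the failure signatures, and the alert threshold are all hypothetical names and values chosen for the example.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class FailureLog:
    """Counts failures by signature and flags the ones that recur."""

    alert_threshold: int = 3  # assumed value, to be tuned per system
    counts: Counter = field(default_factory=Counter)

    def record(self, tool: str, error_kind: str) -> bool:
        """Record one failure; return True exactly when the threshold is reached."""
        signature = (tool, error_kind)
        self.counts[signature] += 1
        return self.counts[signature] == self.alert_threshold

    def recurring(self) -> list[tuple[str, str]]:
        """Signatures seen at least alert_threshold times."""
        return sorted(s for s, n in self.counts.items() if n >= self.alert_threshold)


log = FailureLog(alert_threshold=2)
first = log.record("find_project", "no_exact_match")   # one user asks for "Apollo"
second = log.record("find_project", "no_exact_match")  # another user hits the same wall
other = log.record("create_client", "missing_billing_address")
```

Here the second "no exact match" failure is the one that should wake someone up. The point is not the counter itself but that failures are recorded with enough structure to be compared across users and sessions.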

Why does this specific use case, which you hadn't anticipated, crash the system? Why does this user get a completely off-target response when the question seems obvious? You switch models (GPT to Gemini, or vice versa) and suddenly everything behaves differently. Some things improve, others degrade. But which ones exactly?

You adjust a prompt. It seems better on the problematic case. But are you certain you haven't broken 10 other cases without realizing it?

The problem is that you have no visibility into what really matters.

Sure, you've implemented the standard metrics from LLM monitoring solutions: general model performance (processing speed, resource usage), time to first token (how long the model takes to start generating its response, a key indicator of perceived responsiveness), and thumbs up/down feedback on responses. But for agentic systems, that's not enough. You have no metrics on reasoning quality, no baseline for the decisions made, no regression tests on interaction patterns, and nothing that alerts you when quality truly drops.
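As a rough sketch of what a baseline and a regression test could look like, the snippet below runs the same cases through two versions of an agent and reports which cases regressed and which were fixed. Everything here is an assumption made for illustration: `agent_v1` and `agent_v2` are stand-in functions rather than real agents, and the checks are simple string comparisons where a real suite would evaluate decisions and tool calls.

```python
from typing import Callable

# Hypothetical test suite: each case pairs a request with a check on the agent's answer.
Case = tuple[str, Callable[[str], bool]]


def run_suite(agent: Callable[[str], str], cases: dict[str, Case]) -> dict[str, bool]:
    """Run every case through the agent and record pass/fail per case name."""
    return {name: check(agent(request)) for name, (request, check) in cases.items()}


def diff_runs(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict[str, list[str]]:
    """Compare two runs case by case."""
    return {
        "regressed": sorted(n for n in baseline if baseline[n] and not candidate.get(n, False)),
        "fixed": sorted(n for n in baseline if not baseline[n] and candidate.get(n, False)),
    }


def agent_v1(request: str) -> str:
    # Stand-in for the agent before a prompt or model change.
    return "client created" if "client" in request else "unknown"


def agent_v2(request: str) -> str:
    # Stand-in for the agent after the change: it fixes reports but breaks clients.
    return "report generated" if "report" in request else "unknown"


cases = {
    "create_client": ("Create a client entity for me", lambda out: out == "client created"),
    "generate_report": ("Generate a report on Y", lambda out: out == "report generated"),
}
baseline = run_suite(agent_v1, cases)
candidate = run_suite(agent_v2, cases)
changes = diff_runs(baseline, candidate)
```

With these stand-ins, `changes` lists `create_client` as regressed and `generate_report` as fixed, which is exactly the "which ones exactly?" question that a prompt tweak or a model switch leaves open when no baseline exists.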

You iterate blindly, crossing your fingers that it holds. And it never holds for long.

The chasm between POC/DEMO and PRODUCTION: a challenge that trips up most agentic projects

There's a world (a chasm) between "almost works" and "production ready." This chasm is one of observability and measurement. Without instrumentation, without systematic evaluation, without the ability to understand why the system does what it does, you're building on sand.

That's why most agentic POCs die before reaching production, or make their designers sweat once they've crossed the production threshold. Not because the technology doesn't work. But because we haven't built the conditions for reliability.

Making an LLM Act: "if/then" Kills Decision-Making Plasticity

As soon as we wanted to make a language model act (beyond simple conversational exchange), we had to give it tools, a framework for action, a way to interact with the information system. That's logical. An LLM alone can't do anything in the real world.

But something perverse happened.

We started modeling these interactions: defining steps, conditions, branches. We wanted to create workflows. And we brought these models (designed to reason) back into our classic algorithmic vision, that of if/then, loops, states, and conditions.

Basically, we treated them like "intelligent" APIs.

The result? Systems that look like diagrams on steroids.

They're complex, they look sophisticated, but they've lost what made generative AI powerful, beyond the ability to generate text: decision-making plasticity.

That ability to adapt, to improvise, to find solutions we hadn't programmed.

Or to put it another way:

We killed adaptivity in the name of predictability.

Or in data scientist terms: we sacrificed the stochastic value of LLMs on the altar of procedural determinism.
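To make the if/then framing concrete, here is a deliberately rigid sketch of such a workflow. It is a caricature written for this article's example, not anyone's actual implementation: the `PROJECTS` data, the `handle` router, and the matched phrases are all hypothetical.

```python
from typing import Optional

# Hypothetical in-memory "database"; the project name follows the Apollo example,
# the client and address values are made up.
PROJECTS = {"Apollo 2024": {"client": "ACME", "billing_address": "1 Main St"}}

RETRIEVE_PREFIX = "retrieve info for project "


def find_project(name: str) -> Optional[dict]:
    """Exact-match lookup, as a frozen workflow step would typically do."""
    return PROJECTS.get(name)


def handle(request: str) -> str:
    """Route a request through hard-coded branches."""
    text = request.lower()
    if text.startswith(RETRIEVE_PREFIX):
        project = find_project(request[len(RETRIEVE_PREFIX):])
        if project is None:
            return "error: project not found"
        return f"client={project['client']}"
    if text.startswith("create a client"):
        # The branch matches, but "with the same info as..." is silently ignored.
        return "client created"
    return "error: unsupported request"


exact = handle("Retrieve info for project Apollo 2024")
near_miss = handle("Retrieve info for project Apollo")
partial = handle(
    "Create a client for the Mercury project with the same info as the Apollo project client"
)
unplanned = handle("Copy the Apollo client over to Mercury")
```

The anticipated request succeeds, the near miss fails on an exact-match lookup, the Mercury request "succeeds" while dropping half of what was asked, and the unplanned phrasing is rejected outright. Every new case means another branch, which is how the diagram ends up on steroids.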

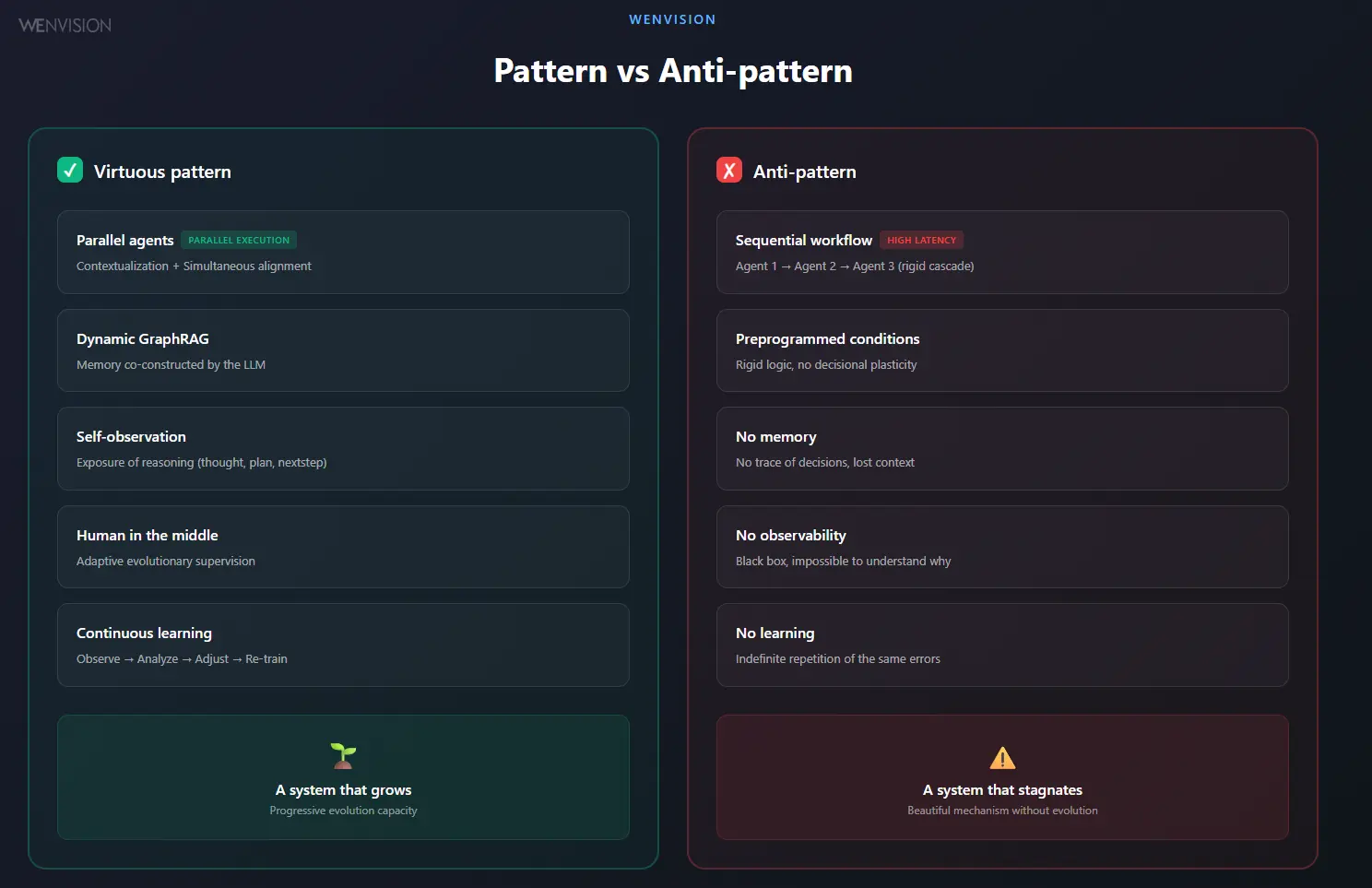

Pattern vs Anti-pattern: Where Are Your Feedback Loops?

Agentic systems open up a fascinating field of experimentation. But it's also a minefield.

The virtuous pattern is a system that learns from itself. Where agents share a goal, correct each other, and enrich one another. Where humans remain in the loop, not to control everything, but to guide, arbitrate, and give meaning.

The anti-pattern, on the other hand, often hides behind impressive architectures. Dozens of agents connected by logical branches. It runs, it executes, it looks alive.

But there's no memory, no learning, no clear supervision loop. The system repeats itself. It doesn't progress. And the more complexity we add, the more opaque its functioning becomes and the harder its quality is to measure.

Virtuous pattern (system that grows) vs Anti-pattern (beautiful mechanics without evolution)

I've seen systems that ran in closed loops, with no human in the circuit. We sold them as "autonomous." In reality, they were illusions: the system learned nothing, it replayed the same score indefinitely. Like a student left to themselves, without feedback, without correction, without direction.

Let's be clear: immediate autonomy is a mirage.

Truly autonomous agents don't exist yet. Not because the technology isn't there, but because we skipped the crucial step: evolutionary supervised learning. A system can only improve if it's observed, evaluated, and guided, and gains autonomy in harmony with its users.

Conclusion: We Need Fundamental Principles

We've made the diagnosis. Current agentic systems suffer from four systemic ailments:

- The impressive POC syndrome: demos that work on 5 cases but collapse on the 6th we hadn't anticipated.

- Loss of plasticity: rigid architectures that killed the decision-making plasticity of generative AI in the name of predictability.

- Absence of observability: we iterate blindly, without understanding why it passes or why it breaks.

- The mirage of immediate autonomy: systems sold as autonomous that learn nothing.

To bridge the chasm between POC and production, it's not enough to add more agents, more conditions, more complexity. We need to rethink the foundations.

We need architectural principles that allow a system to learn, adapt, and grow.

That's what we detail in part two: the principles of adaptive agentic systems.
