Adaptive Agentic - Principles of an AI That Grows

Understanding Adaptive Agentic Systems

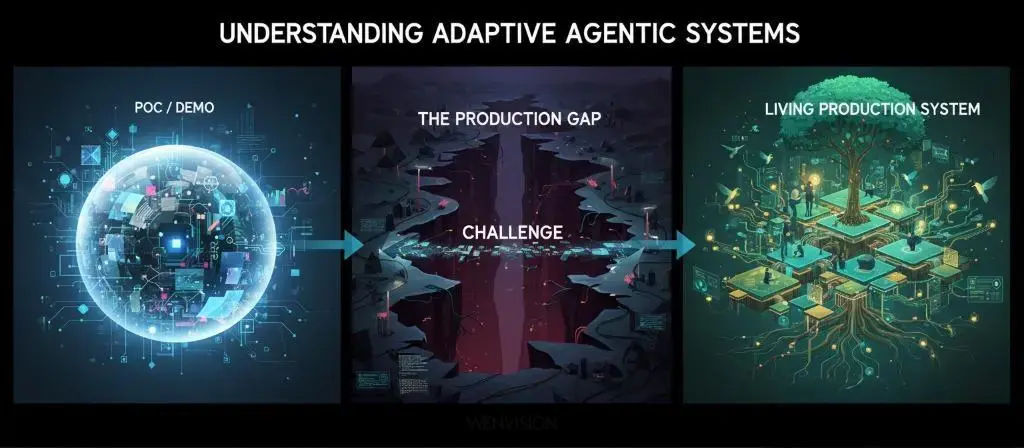

Agentic AI is everywhere. We talk about it, we build it, we sell it. But between impressive demos and systems that hold up in production, there's a gap that few cross.

This three-part series explores this gap, and how to bridge it.

Part 1 - Agentic AI That "Almost Works" Doesn't Work

We lay the conceptual foundations. Why current agentic systems struggle to move from POC to production, what the systematic pitfalls are, and what principles to build on to avoid them. The problem isn't that the system crashes; it's that it works "somewhat" and never reaches the level of reliability that production requires. And above all, we don't know how to improve it because we didn't equip the system for that from the start.

Part 2 - Adaptive Agentic: Principles of an AI That Grows

We move to the vision. The principles of adaptive agentic systems, how to build systems that don't just execute but observe, learn, and grow. We explore the architectural pillars that make this vision possible.

Part 3 - Putting Adaptive Agentic into Production, Illustrated with RAISE

→ Part 3a: Architecture, design and operationalization

→ Part 3b: Multi-agent orchestration and dynamic knowledge graph

We move to implementation. How these principles translate concretely into RAISE, the SFEIR Group's generative AI platform. What architectures, what design choices, what mechanics to put in place. From concept to operational reality.

Because between being right on paper and running a system in production, there's a whole world. And it's precisely that world we're going to explore.

In the first part, we made the diagnosis: current agentic systems rarely move from POC to production, due to lack of reliability, observability, and learning capacity.

In this second part, we explore the alternative vision: adaptive agentic. We present the architectural principles that enable building systems that don't just execute, but observe, learn, evolve. Systems that grow with you.

An IS That Dialogues, Not Commands

Before diving into technical details, let's establish the overall vision.

At WEnvision, we advocate a clear vision: the information system of the future won't be a stack of applications and interfaces. It will be conversational.

Not just in the sense of "talking to a chatbot." Conversational in the deep sense: a space where human and artificial personas continuously dialogue to achieve a common goal. A system that negotiates, clarifies, adjusts.

The conversational IS is the arrival of natural language in all interactions: Human → IS, Human → AI, and AI → AI. Agents collaborating with each other in natural language, processes adjusting through dialogue, models negotiating their decisions.

The interface, the button, the form, the screen, won't disappear. But they'll cease to be the condition for action. They'll become the support for an exchange. It's no longer the user adapting to the system; it's the system adapting to the user.

And that changes everything.

Because a conversational system isn't just an assistant. It's a cognitive partner. It doesn't just execute a task. It helps define it, clarify it, design its process. It reasons, it justifies, it learns.

That's the real transformation. Not a chatbot that responds politely. But a system that co-constructs with you.

This vision isn't a distant horizon. It's the structural framework within which all the principles we're about to detail now fit.

For an information system to become truly conversational, to be able to negotiate, adjust, co-construct, it needs specific foundations:

- The ability to self-observe to understand its own reasoning

- A cognitive base that structures the context (user, mission, information)

- A living organizational memory that makes knowledge accessible and structured

- An architecture that favors cooperation over mechanical execution

- Human-supervised learning that helps the system grow

Let's now explore these foundations, one by one.

Self-Observability and Intelligent Quality Supervision

For a system to learn, it must first be able to see how it reasons. Not just what it responds, but why it responds that way, what assumptions it makes, which areas make it uncertain.

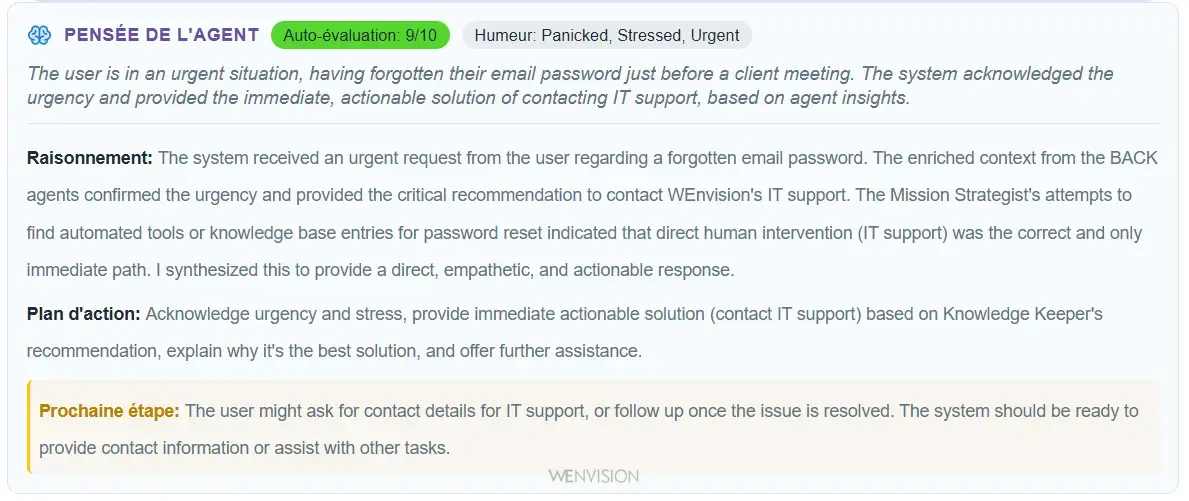

Example of self-observability: the system exposes its thinking, reasoning, and action plan

This is the first principle, and perhaps the most crucial: self-observability.

Why observability is the condition for everything

Without observability, you're blind. You don't know if your prompt works well, you don't detect degradations, you don't understand why one case passes and another fails. You're dependent on what you can guess from the AI's response.

You iterate blindly, crossing your fingers.

A concrete lived example

In an exploratory phase, we had an LLM that responded normally to users. Nothing unusual on the surface. But by analyzing the observation traces, we noticed that the model wrote in its internal reasoning: "the user asks me twice...".

Digging deeper, we quickly discovered a bug in our LangGraph implementation that was sending user inputs twice to the LLM. Without self-observability, this problem would have remained completely invisible to us, causing unnecessary token consumption and possible confusion in the model's reasoning.

The system itself revealed its own malfunction immediately.
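The kind of check that would surface this bug from traces can be sketched in a few lines. This is a minimal illustration, not our LangGraph code: the `Message` type and the `find_duplicate_user_inputs` function are hypothetical names, and the trace is invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    role: str      # "system", "user", or "assistant" (assumed role names)
    content: str


def find_duplicate_user_inputs(messages: list[Message]) -> list[int]:
    """Return indexes of user messages that repeat the immediately preceding user message."""
    duplicates = []
    previous_user_content = None
    for index, message in enumerate(messages):
        if message.role != "user":
            # Any non-user turn breaks the run of consecutive user messages.
            previous_user_content = None
            continue
        if message.content == previous_user_content:
            duplicates.append(index)
        previous_user_content = message.content
    return duplicates


# Invented trace: the same user input reaches the model twice in a row.
trace = [
    Message("system", "You are a support assistant."),
    Message("user", "How do I reset my VPN token?"),
    Message("user", "How do I reset my VPN token?"),
]
duplicate_indexes = find_duplicate_user_inputs(trace)
print(duplicate_indexes)
```

A check like this only catches the symptom once you know to look for it; in our case it was the model's exposed reasoning that told us to look.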

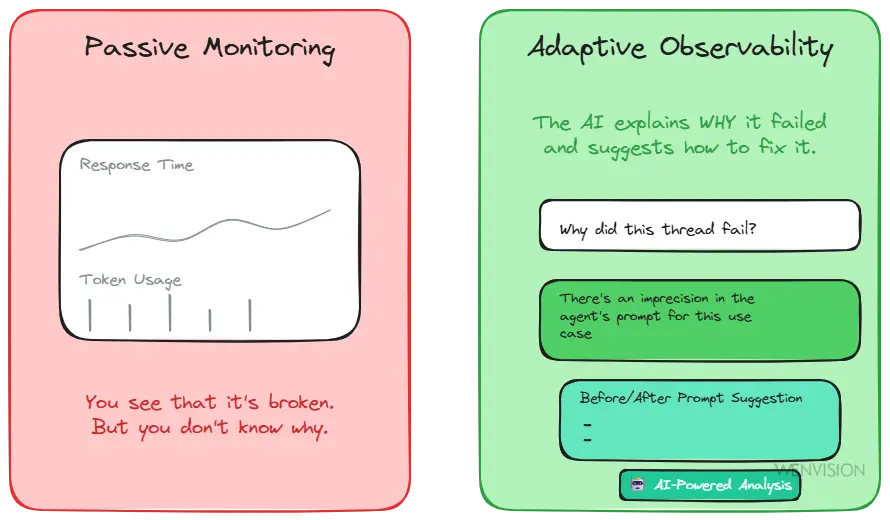

Intelligent quality supervision: beyond dashboards

But self-observability alone isn't enough. You also need a system that evaluates quality, detects degradations, identifies problematic patterns.

Rather than guessing whether a prompt change improves or degrades the system, we measure. We use a dedicated agent that analyzes conversations, identifies recurring problems, and recommends precise improvements. Not "the system works less well," but "the agent asks for too many clarifications because the prompt lacks guidance on when to act."

Passive monitoring (metrics without context) vs Conversational analysis (dialogue with the quality agent)

Conversational AI-driven quality management. You don't just consult a dashboard with metrics. You dialogue with the quality agent:

- "Why did this thread go wrong?"

- "What improvements do you suggest for this type of case?"

- "Compare the two prompt versions"

The system evaluates the quality of its own outputs, detects inconsistencies, signals areas of low confidence. It can even suggest prompt modifications, with a before/after and an impact estimate.
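To make "we measure" concrete, here is a minimal sketch of a before/after comparison between two prompt versions, using the clarification example above. The `Thread` fields, the function names, and the sample numbers are all assumptions made for illustration; a real quality agent would derive such counts from the conversation traces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thread:
    prompt_version: str
    agent_turns: int            # number of agent replies in the thread
    clarification_turns: int    # replies that only asked the user a question


def clarification_rate(threads: list[Thread], version: str) -> float:
    """Share of agent turns spent asking for clarification, for one prompt version."""
    selected = [t for t in threads if t.prompt_version == version]
    total_turns = sum(t.agent_turns for t in selected)
    if total_turns == 0:
        return 0.0
    return sum(t.clarification_turns for t in selected) / total_turns


def compare_versions(threads: list[Thread], before: str, after: str) -> dict[str, float]:
    """Return the rate for each version and the change between them."""
    rate_before = clarification_rate(threads, before)
    rate_after = clarification_rate(threads, after)
    return {"before": rate_before, "after": rate_after, "delta": rate_after - rate_before}


# Invented sample data for two prompt versions.
threads = [
    Thread("v1", agent_turns=4, clarification_turns=3),
    Thread("v1", agent_turns=6, clarification_turns=2),
    Thread("v2", agent_turns=4, clarification_turns=1),
    Thread("v2", agent_turns=4, clarification_turns=1),
]
report = compare_versions(threads, before="v1", after="v2")
print(report)
```

A negative `delta` here means the newer prompt asks for clarification less often, which is the kind of evidence a before/after recommendation can rest on.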

Without this ability to self-observe and self-evaluate, no learning is possible.

The Three Pillars of a Learning System

An adaptive system isn't written like a program. It's trained like an organism.

And for that, it rests on three foundations that constitute its cognitive base:

1. User context

Understanding who's acting: intention, constraints, role, expected level of autonomy. Not just a username in a database, but a real understanding of what the person is trying to accomplish.

Why is this essential? Because the same action ("create a client") doesn't have the same meaning depending on whether it comes from a salesperson prospecting, a project manager assembling a team, or an accountant regularizing billing. Without user context, the system responds generically, where it should adapt to the real need.

2. Mission context

Defining why we're acting. Not just the list of steps to follow, but the meaning of the result.

It's not the same thing to "reset a password because the user forgot it" and to "reset a password because it appears in a leak." Similarly, "changing a client's address because there was an entry error" doesn't require the same actions as "changing a client's address because they moved."

Why is this essential? Because understanding the intention behind the action allows the system to make the right decisions when it encounters an unforeseen situation.

If the password appears in a leak, the system must force immediate change, check recent connections, alert security. If the user simply forgot it, following the standard process is sufficient.

If a client moved, you need to update the billing address, the delivery address, and potentially reassign the client to a different commercial subsidiary. If it's an entry error correction, just correct the address without touching the rest.

Without mission context, the system mechanically applies rules, where it should exercise judgment.
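The password example can be written down as a small sketch: the same request produces a different plan depending on the reason behind it. The `ResetReason` enum and the action names are hypothetical labels chosen for this illustration, and in an adaptive system the reason would be inferred from the conversation rather than passed in as a flag.

```python
from enum import Enum


class ResetReason(Enum):
    FORGOTTEN = "forgotten"
    LEAKED = "leaked"


def plan_password_reset(reason: ResetReason) -> list[str]:
    """Return the ordered actions for a password reset, depending on why it is requested."""
    if reason is ResetReason.LEAKED:
        # A leaked password is a security incident, not a convenience request.
        return [
            "force_immediate_password_change",
            "check_recent_connections",
            "alert_security",
        ]
    # A forgotten password only needs the standard process.
    return ["run_standard_reset_process"]


print(plan_password_reset(ResetReason.LEAKED))
print(plan_password_reset(ResetReason.FORGOTTEN))
```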

3. Information modeling

Making knowledge accessible, linked, interpretable. If your data is buried in silos, the LLM will search. If it has to search, it will slow down. If it slows down, the user will give up.

A well-structured knowledge graph allows the model to reason rather than search. It's like the difference between memorizing by heart and understanding the links between concepts.

These three layers (user, mission, information) constitute the system's cognitive base. They allow the LLM to connect actions to intention, adjust its strategy, create coherent plans without them having been programmed.

But how do you structure and maintain this cognitive base? That's where dynamic GraphRAG comes in.

Cognitive Memory: Dynamic GraphRAG

A learning system needs memory. Not just a database, but a knowledge structure that models the organization, processes, relationships. A memory that can be queried, enriched, understood by the LLM.

That's the role of knowledge graphs.

The graph as cognitive foundation

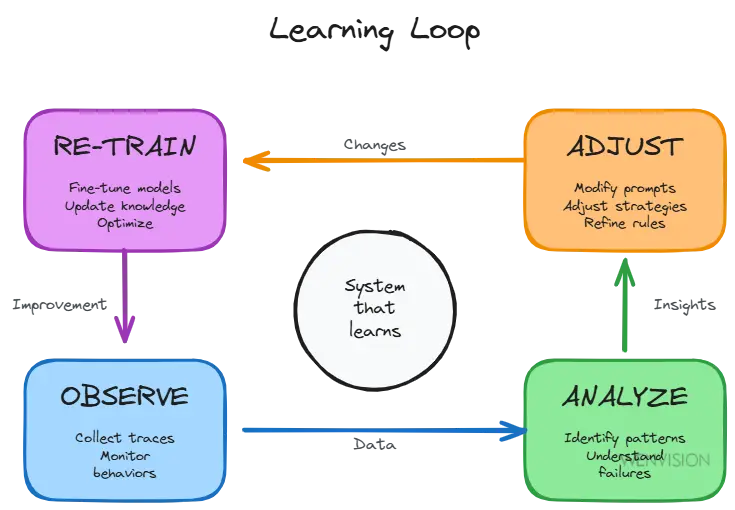

To be reliable, an agentic system must remain observable, measurable and perfectible. The graph structures the system's memory (entities, relationships, decisions) in a form that the LLM can understand, query and enrich.

The graph becomes the organizational memory:

- It links facts, actors and processes

- It makes decisions traceable

- It allows learning from past interactions

And around this base, a virtuous loop is established: Observe → Analyze → Adjust → Retrain

The continuous learning loop: observe, analyze, adjust, retrain

It's this loop that transforms an agent system into a learning system. Without it, you just have beautiful mechanics. With it, you have an evolving organism.

An example of the graph's power

Let's take a concrete case: an IT support agent handling assistance requests.

Thanks to the organizational graph that reproduces the company's org chart, the system can identify that 60% of reported problems come from the same team and concern the same type of malfunction (for example, recurring VPN access problems).

Rather than treating each ticket individually, the system can escalate the issue to the manager level: "12 people on your team are experiencing the same problem. There's probably a systemic cause to address."

Without the graph, each ticket is treated in isolation. With the graph, the system sees organizational patterns and can propose solutions at the right level.
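As a rough sketch of that pattern detection, the org chart can be reduced to two lookups (person to team, team to manager) and tickets grouped along them. The names, the data, and the `min_reporters` threshold are invented for the example; a real graph store would replace the dictionaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    reporter: str
    category: str


# Hypothetical slice of the organizational graph.
TEAM_OF = {"ana": "sales", "ben": "sales", "chloe": "sales", "dev": "finance"}
MANAGER_OF = {"sales": "marie", "finance": "paul"}


def find_escalations(tickets: list[Ticket], min_reporters: int = 3) -> list[dict]:
    """Group tickets by (team, category) and flag groups reported by many distinct people."""
    reporters: dict[tuple[str, str], set[str]] = {}
    for ticket in tickets:
        key = (TEAM_OF[ticket.reporter], ticket.category)
        reporters.setdefault(key, set()).add(ticket.reporter)
    return [
        {"manager": MANAGER_OF[team], "team": team, "category": category, "people": len(people)}
        for (team, category), people in sorted(reporters.items())
        if len(people) >= min_reporters
    ]


tickets = [
    Ticket("ana", "vpn"),
    Ticket("ben", "vpn"),
    Ticket("chloe", "vpn"),
    Ticket("dev", "printer"),
]
escalations = find_escalations(tickets)
print(escalations)
```

The grouping itself is trivial; what the graph contributes is the link from a reporter to a team and from a team to the person who can act on a systemic cause.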

Dynamic co-construction

These graph bases cannot be frozen, built once and for all.

They must be co-constructed: the LLM decides what deserves to be structured, the human arbitrates and validates. Iteration after iteration, the graph becomes increasingly faithful to reality.

It's not a static schema. It's an organism that evolves with you.
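One way to picture this co-construction is a proposal queue: the LLM suggests facts, and nothing enters the graph until a human has reviewed it. The sketch below assumes a simple triple representation; `Triple`, `CoConstructedGraph`, and the sample facts are hypothetical and only illustrate the division of roles.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Triple:
    subject: str
    relation: str
    obj: str


@dataclass
class CoConstructedGraph:
    accepted: set[Triple] = field(default_factory=set)
    pending: list[Triple] = field(default_factory=list)

    def propose(self, triple: Triple) -> None:
        """Record an LLM-suggested fact; it is not part of the graph yet."""
        if triple not in self.accepted and triple not in self.pending:
            self.pending.append(triple)

    def review(self, approve: Callable[[Triple], bool]) -> None:
        """Apply the human reviewer's decision to every pending proposal."""
        for triple in self.pending:
            if approve(triple):
                self.accepted.add(triple)
        self.pending = []


graph = CoConstructedGraph()
graph.propose(Triple("marie", "manages", "sales"))
graph.propose(Triple("marie", "dislikes", "vpn"))  # not worth structuring
# The lambda stands in for a human decision made in a review interface.
graph.review(lambda triple: triple.relation == "manages")
print(graph.accepted)
```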

The graph thus allows structuring and keeping alive the three pillars of the cognitive base (user context, mission, information) that we just detailed. But how does the system concretely use this structured memory to reason and act? That's where multi-agent architecture comes in.

Adaptive Multi-Agent Architecture

Now that we've established observability (how we see) and memory (what we know), let's explore the architecture: how the system reasons and acts.

Cooperation vs juxtaposition

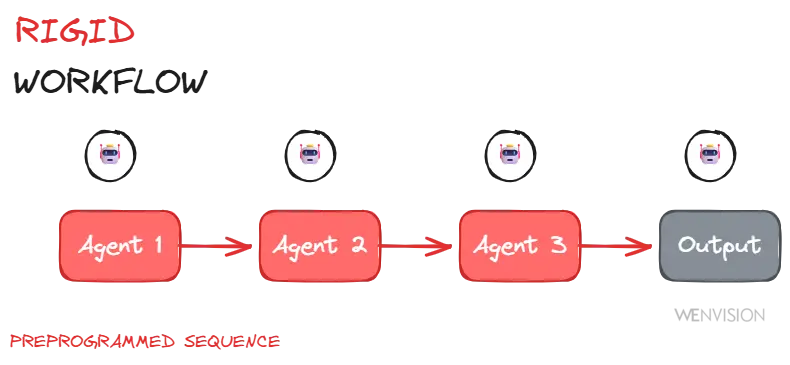

Most current architectures confuse multi-agent with multi-process.

We create multiple agents. Each has its task. They execute in parallel or in sequence. We connect them with conditions. And we call it "multi-agent."

But do these agents really collaborate? Do they share a common vision? Do they consult each other, correct each other, merge their reasoning?

Or are they just multiple "minds" that ignore each other?

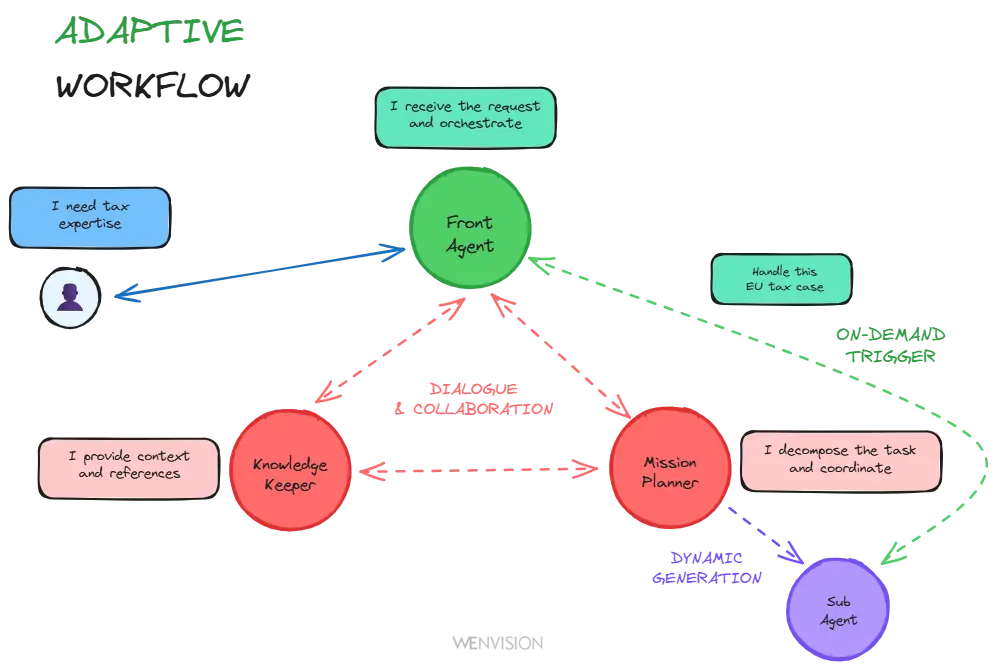

In an adaptive agentic system, agents aren't simply parallel. They're interdependent and contextual. One can solicit another. One can question another's reasoning. They can converge toward a collective decision that didn't exist in their individual logic, or solicit the human when necessary.

The goal is no longer coordination, but cognitive cooperation.

And sometimes, very importantly, a single well-designed agent (capable of playing multiple internal roles depending on context) is worth more than an army of isolated executors.

Parallel agents: reproducing human cognition

When you approach a complex problem, you naturally do three things simultaneously: you contextualize (who, what, where, how does it work here), you align with a mission (what's my role, what's expected of me, is there a process for this), and you draw on your experience (what have I done in a similar or close case, what are the past errors or successes related to this request).

These three dimensions aren't sequential. They're parallel.

A multi-agent architecture can reproduce this: specialized agents analyze the input in parallel (organizational context on one side, strategic alignment on the other), then a synthesis step combines their findings so that the agent facing the user answers with both understanding and knowledge.

This isn't complexity for its own sake. It's an architecture that mimics our natural way of reasoning. And what we seek with agentic systems is precisely this: not a "magic API" or a program, but a true intelligent collaborator.
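The parallel pattern can be sketched with Python's `asyncio.gather`, which runs several coroutines concurrently and returns their results in order. The three agent functions below are stubs with invented names; in a real system each would call a model or query the graph.

```python
import asyncio


async def context_agent(request: str) -> str:
    # Stub: a real agent would query the organizational graph.
    return f"context for '{request}'"


async def mission_agent(request: str) -> str:
    # Stub: a real agent would look up the role and the applicable process.
    return f"mission for '{request}'"


async def experience_agent(request: str) -> str:
    # Stub: a real agent would retrieve similar past cases.
    return f"experience for '{request}'"


async def analyze(request: str) -> dict[str, str]:
    """Run the three analyses concurrently, then merge them for the front agent."""
    context, mission, experience = await asyncio.gather(
        context_agent(request),
        mission_agent(request),
        experience_agent(request),
    )
    return {"context": context, "mission": mission, "experience": experience}


briefing = asyncio.run(analyze("VPN access is down"))
print(briefing)
```

The merged `briefing` is what the user-facing agent would receive before it writes its answer.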

Adaptive workflow: trigger on demand vs rigid orchestration

If your agents contextualize in parallel, you don't need to orchestrate everything in advance. The system can decide to call a sub-agent when it's relevant, not because it's wired into a workflow.

And these agents don't only work in API mode (input/output). They can have a real dialogue between them. An agent can interrogate another agent, clarify, negotiate, iterate. It's collaboration, not just data transmission.

Rigid workflow: sequential and predefined orchestration

Dynamic workflow: agents that dialogue and adapt based on context

Dynamic sub-agent generation

Even more powerful: the system can itself generate specialized sub-agents, adapted to the context and mission of the moment. Need to analyze a specific type of document? Need specialized expertise in a particular domain? The system creates the agent it needs, with the right prompt, the right tools, the right scope.

It's not chaos. It's guided adaptivity.

Agents establish the framework. The system decides the tactics. And the human supervises the trajectory.
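"Guided adaptivity" can be illustrated by a sub-agent factory that builds a specification on demand but only grants tools the framework allows. Everything here is an assumption made for the sketch: the `SubAgentSpec` fields, the tool names, and the allow-list are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical guardrail: the only tools a generated sub-agent may receive.
ALLOWED_TOOLS = frozenset({"read_document", "search_graph"})


@dataclass(frozen=True)
class SubAgentSpec:
    name: str
    prompt: str
    tools: tuple[str, ...]


def build_sub_agent(task: str, requested_tools: list[str]) -> SubAgentSpec:
    """Create a sub-agent spec for a task, keeping only the tools the framework allows."""
    granted = tuple(tool for tool in requested_tools if tool in ALLOWED_TOOLS)
    return SubAgentSpec(
        name=f"{task.replace(' ', '_')}_agent",
        prompt=f"You are a specialist. Your only task: {task}.",
        tools=granted,
    )


spec = build_sub_agent("contract analysis", ["read_document", "send_email"])
print(spec)
```

The system chooses the task and asks for tools; the framework decides which of those requests are honored.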

Trajectory Toward Autonomy: Maturity Stages

Total autonomy is a horizon. Not an immediate stage.

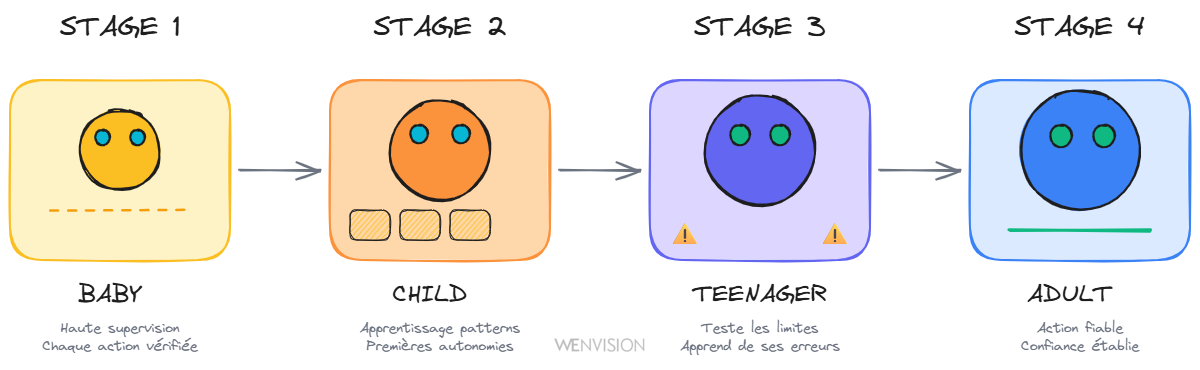

An agentic system goes through, like a living being, several maturity stages:

The 4 maturity stages: Baby (total supervision), Child (pattern learning), Teenager (testing limits), Adult (reliable action)

Conception

First of all, as with a child, you have to conceive it ;-)

And unlike a human child who can sometimes be conceived in the spontaneity of the moment, an agentic system is always conceived methodically. You must define its context, its role, its limits. And do this conversationally: the system must "learn to conceive itself" with the human, not be set in stone in advance. No immaculate conception here, but progressive co-construction.

The baby

It explores, but remains under high supervision. Every action is verified. That's normal.

The child

It learns to reproduce and generalize. It begins to understand patterns. We let it do little things by itself.

The teenager

It tests limits. It discovers responsibility. Sometimes it fails, and that's how it really learns.

The adult

It acts reliably, aware of its environment. We can trust it with important decisions (these opinions being only the author's 😁).

Today, most agentic systems are at the "child" stage.

And that's perfectly fine.

The mistake would be to force their independence, to claim they're already autonomous, to put them in production without supervision. What's needed is to build the trust, understanding and transparency that will enable that autonomy, when it's earned.

Human in the Middle: An Evolving Posture

This human supervision is what we call "human in the middle." But be careful: it's not a fixed notion.

Evolution of the human role: from high supervision (Baby) to strategic oversight (Adult)

The evolution of the human's role

The human's role evolves with the system's maturity. At first, the "parent" is there to verify everything, every decision, every action. That's normal, the system is still learning and exploring. Then progressively, we delegate simple cases, we only supervise complex or ambiguous situations. We move from "verifying" to "arbitrating." Then from "arbitrating" to "auditing."

The goal isn't to keep the human prisoner of the system. It's to evolve their position as trust builds. From constant supervision toward exception management. From exception management toward strategic analysis.
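The shift from verifying to arbitrating to auditing can be expressed as a small policy. This is a sketch under our own naming: the `Posture` enum and the `is_ambiguous` flag are hypothetical, and deciding when to move from one posture to the next remains a human judgment.

```python
from enum import Enum


class Posture(Enum):
    VERIFY = "verify"        # every action is checked before it runs
    ARBITRATE = "arbitrate"  # only complex or ambiguous cases are checked
    AUDIT = "audit"          # actions run, then are reviewed after the fact


def needs_human_before_acting(posture: Posture, is_ambiguous: bool) -> bool:
    """Decide whether a human must validate the action before it is executed."""
    if posture is Posture.VERIFY:
        return True
    if posture is Posture.ARBITRATE:
        return is_ambiguous
    return False


print(needs_human_before_acting(Posture.ARBITRATE, is_ambiguous=True))
```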

And with observability, we're able to understand, justify, and improve an agent's work, as we would for a human. No one expects a new hire to be 100% operational on day one. We consider that, in the way it integrates into the company, an agentic system is closer to a person than to a program.

Why human in the middle isn't optional: a lived example

During a demo, we had created an agent to respond to HR issues, with a fictitious company org chart as the knowledge base.

A user asks a question the agent doesn't know how to handle. And there, we observe in real time the system reasoning: it queries the organizational base, identifies people from the HR department, deduces the company's email address format from that of our test user, notices it has an email sending tool at its disposal... and autonomously formulates a request to the fictitious HR person.

Fascinating in terms of demonstrated autonomy. Worrying if you realize that in production, this email would have actually been sent.

It's the perfect illustration of why "human in the middle" isn't an optional principle. The system's ability to reason and act autonomously is real and powerful. But without supervision, this autonomy can quickly exceed the limits we thought we had defined. The graph gives the system the means to act intelligently; the human must remain the safeguard that validates these actions are appropriate.
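A safeguard of this kind can be sketched as a gate placed between the agent and any tool with side effects. The `ApprovalGate` class, the `send_email` stub, and the approval rule are hypothetical; the `approve` callback stands in for a real human decision.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ApprovalGate:
    """Holds tool calls until the approval callback accepts them."""
    approve: Callable[[str, dict], bool]
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def call(self, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        if not self.approve(tool_name, kwargs):
            # The refusal is logged so the attempt stays observable.
            self.audit_log.append((tool_name, "blocked"))
            return None
        self.audit_log.append((tool_name, "executed"))
        return tool(**kwargs)


def send_email(to: str, body: str) -> str:
    # Stub standing in for a real email tool; it sends nothing.
    return f"sent to {to}"


# Here the reviewer refuses every email; a real reviewer would decide case by case.
gate = ApprovalGate(approve=lambda name, args: name != "send_email")
result = gate.call("send_email", send_email, to="hr@example.com", body="Question about leave")
print(result, gate.audit_log)
```

Logging the blocked attempt matters as much as blocking it: the attempt itself is what tells you the system has found a path you had not anticipated.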

But more than that: the system must be allowed to learn and brought to the right maturity to ensure its actions are compliant. It's not just a question of one-off checks, it's a question of progressive education.

A mature system isn't a system without humans

A mature system isn't a system without humans. It's a system where the human intervenes at the right level, at the right time, on the right decisions. Where their role is no longer to constantly correct, but to guide evolution, identify new edge cases, define what deserves to be learned.

Without this evolving vision of "human in the middle," we remain stuck in two equally sterile extremes: either we want to control everything (and the system never learns), or we let go too quickly (and the system spirals out of control).

The boundary between fashion and maturity is this:

"Hype" systems want to replace humans; sustainable systems seek to evolve human collaboration with the machine.

Adaptive agentic isn't about "letting the AI do it." It's about creating the conditions for it to learn to do it right, in a controlled, observable and co-evolutionary framework.

The Promise: A System That Grows

This approach (self-observability, dynamic GraphRAG, adaptive multi-agent architecture, evolving human in the middle) doesn't guarantee immediate autonomy.

It guarantees something more precious: the ability to evolve and long-term reliability.

A system that observes its own functioning, learns from its interactions, enriches its memory, adjusts its strategies. A system that grows with you, that becomes more relevant over time, that doesn't stagnate in its own logic.

A system that progressively moves from childhood to maturity, because we gave it the conditions to learn.

These principles aren't theoretical. They were designed to be implemented, tested, put into production.

That's what we'll explore in the third part: how these principles translate concretely into RAISE, the SFEIR Group's generative AI platform. From technical architecture to design choices, from co-construction mechanics to operational results.

Because between being right on paper and running a system in production, there's all the implementation. And that's where everything is decided.
