Agentic AI in Production: Lessons from Two Years of Deployment

How to anticipate the right challenges when nobody's talking about them yet

Early 2023: Betting on Agentic Before the Wave

It was early 2023. At a European fintech, we were exploring something that didn't have a name yet: making generative AI act, not just respond.

It was a particular time. Large language models (LLMs) had just emerged. The industry was discovering ChatGPT. Nobody was talking about AI agents or autonomous workflows yet. And us? We already wanted AI to manage our technical operations.

The problem? No solution existed for that.

So we did what pioneers do: we built our own. A system that allowed our AI agents to execute real operational actions in a banking context with its security and compliance constraints.

The Use Case: AI4Ops, Intelligent Automation of Level 1 IT Support

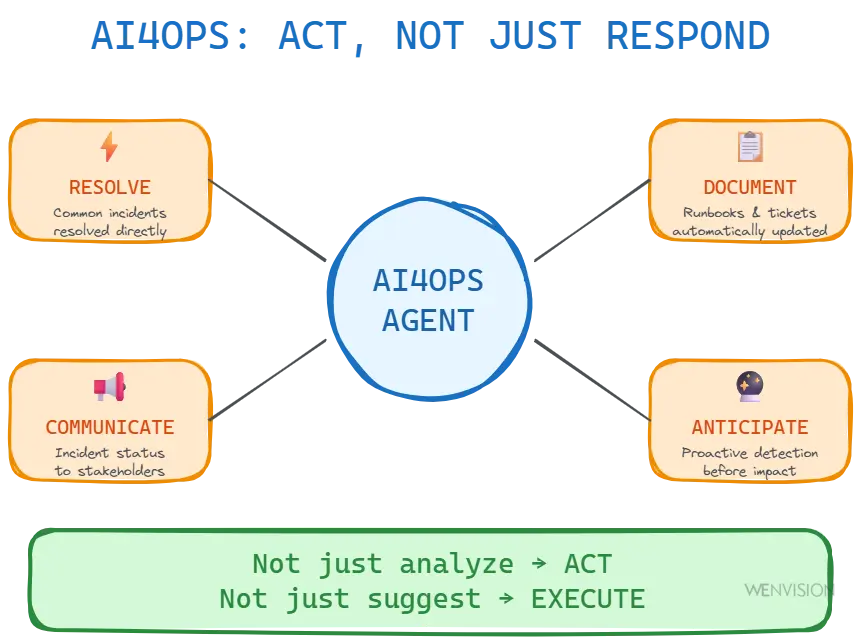

Our system covered four complementary axes:

AI4Ops: Resolve, Diagnose, Communicate, Anticipate - Not just analyze, act; not just suggest, execute

→ Automatic resolution of common incidents

Access issues (CRM, messaging, collaborative tools), rights assignment, account resets. The agent diagnoses, fixes directly when possible, or prepares escalation with full context.

→ Pre-diagnosis and qualification

Analysis of reported errors, identification of recurring patterns, initial technical qualification before transfer to specialized teams. No more incomplete tickets bouncing back and forth.

→ Incident communication

While an incident is ongoing, the agent summarizes the current status on demand: what's been done, who's mobilized, how far along the resolution is. Experts stay focused on resolution without being interrupted for communication.

→ Proactive detection

Continuous infrastructure monitoring, anomaly identification before user impact, optimization recommendations based on history.

And many other aspects related to reducing operational overhead and improving operational quality: technical documentation generation, runbook creation, trend analysis...

Every action traced, every decision auditable. Autonomous workflows with human validation on sensitive operations. This was agentic before the term became mainstream.

Not just analyze. Act.

Not just suggest. Execute.

Not just converse. Orchestrate.

What You Learn Building for a Constrained Environment

And it worked. 100% of level 1 support automated with high quality, natively multilingual to serve all teams. Over 90% reduction in operational costs, all while maintaining strict compliance and auditability in a banking environment.

But success wasn't just technical. It was also a profound transformation in how employees integrate generative AI into their daily work. IT teams learning to supervise rather than execute, users naturally interacting with an agent for their issues, an organization evolving its processes to leverage intelligent automation.

And we quickly understood that this transformation wasn't unidirectional. It wasn't enough to change how humans work. We also had to anticipate that we'd need to change how the AI itself works to optimize this interaction. How does the agent learn from its mistakes? How does it improve over time? How does it better understand the organization's context?

These early insights led us to what we at WEnvision and SFEIR now call the conversational information system of the future: systems where natural interaction with AI becomes the preferred mode of accessing services, data, and processes. An infrastructure that doesn't just automate, but converses, understands, and grows with the organization.

The Essential Questions

But building agentic systems in production, in a demanding banking context, forces you to ask the right questions from the start.

You can't deploy an agent that acts in a bank without:

- ✅ Complete observability: every decision must be traceable, every reasoning auditable

- ✅ Secure action tools: the agent can't just "call APIs", it must operate within a validated framework

- ✅ Clear human position: who validates what, when, how? Autonomy can't be total from the start

- ✅ Auditable feedback loops: capture user feedback beyond simple thumbs up/down

These constraints, far from being obstacles, guided us toward the right architectural intuitions.
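To make these four requirements more concrete, here is a minimal Python sketch of a gated tool executor. It is an illustration under stated assumptions, not the system we ran in production: the names `Tool`, `GatedExecutor`, `approve`, and the two example tools are all hypothetical, and the approval hook is a stand-in for a real human validation step.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Tool:
    """A validated action the agent is allowed to call (hypothetical shape)."""
    name: str
    run: Callable[[dict], str]
    sensitive: bool = False  # sensitive tools require human approval


@dataclass
class GatedExecutor:
    tools: dict[str, Tool]                 # allow-list: nothing else can run
    approve: Callable[[str, dict], bool]   # human-in-the-loop hook (assumed)
    audit_log: list[dict] = field(default_factory=list)

    def execute(self, tool_name: str, args: dict, reasoning: str) -> str:
        tool = self.tools.get(tool_name)
        if tool is None:
            outcome = "rejected: unknown tool"
        elif tool.sensitive and not self.approve(tool_name, args):
            outcome = "rejected: approval denied"
        else:
            outcome = tool.run(args)
        # Every attempt is recorded with its stated reasoning, even rejections.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "tool": tool_name,
            "args": args,
            "reasoning": reasoning,
            "outcome": outcome,
        })
        return outcome


executor = GatedExecutor(
    tools={
        "unlock_account": Tool("unlock_account", lambda a: f"unlocked {a['user']}"),
        "grant_rights": Tool(
            "grant_rights", lambda a: f"granted {a['role']}", sensitive=True
        ),
    },
    approve=lambda name, args: False,  # stand-in: the human says no
)

first = executor.execute(
    "unlock_account", {"user": "alice"}, "Account locked after failed logins"
)
second = executor.execute(
    "grant_rights", {"user": "alice", "role": "crm_admin"}, "User requested admin rights"
)
```

The design choice worth noting is that the audit entry is written on every path, including rejections, so the trail shows what the agent tried to do and why, not only what it was allowed to do.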

Analyzing our production metrics, we identified what worked: the system executed, traced, improved through feedback. But we also identified what was missing to truly scale: a formalized architecture that separates what's stable (the organization) from what moves fast (projects), transparency on how the agent reasons, extensibility without reconstruction.

We had built an agentic AI that executes reliably and auditably. But we lacked a complete architectural vision to move to the next level.

2024: The Agentic Boom... and the Blind Spots

Then came 2024. Suddenly, agentic was everywhere. Frameworks flourished: LangGraph, CrewAI, AutoGen, and dozens of others. Workflow automation solutions multiplied. Impressive proofs of concept (POCs) too.

And observing what was emerging, something struck me.

The aspects we had identified as essential in 2023 weren't treated as foundational building blocks, but rather added as afterthoughts.

New frameworks focused on orchestration, execution, coordination. That's important, of course. But several critical dimensions remained secondary:

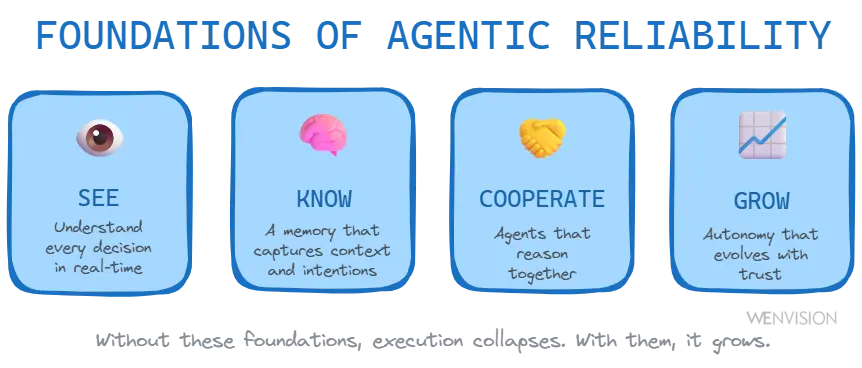

Foundations of a reliable agentic system: observability, structured memory, cognitive cooperation, and adaptive supervision

🔍 Reasoning Observability

Solutions offered logs, execution traces. But understanding why an agent makes a particular decision? Observing its uncertainty, hesitations, plan? Rarely thought through from the start.

🧠 Structured Organizational Memory

Intelligent search solutions exist. But truly structuring knowledge? Separating what's stable (organization, processes) from what evolves quickly (missions, projects)? Added later, if added.

🤝 Cognitive Cooperation Between Agents

Multi-agent workflows, sophisticated orchestrations. But agents that truly reason in parallel and synthesize their contributions? More like disguised sequential execution.
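As a rough illustration of the difference, the Python sketch below runs two agents concurrently on the same incident and then merges their contributions, instead of chaining one after the other. Everything in it is an assumption made for the example: the agents are hard-coded stubs standing in for model calls, and ranking by a self-reported confidence score is a deliberately naive synthesis strategy, not a description of how any particular framework or platform does it.

```python
import asyncio


async def network_agent(incident: str) -> dict:
    await asyncio.sleep(0)  # placeholder for a real model or tool call
    return {"agent": "network", "hypothesis": "VPN gateway saturated", "confidence": 0.6}


async def identity_agent(incident: str) -> dict:
    await asyncio.sleep(0)  # placeholder for a real model or tool call
    return {"agent": "identity", "hypothesis": "expired SSO certificate", "confidence": 0.8}


def synthesize(contributions: list[dict]) -> dict:
    """Merge all contributions, keeping the alternatives visible."""
    ranked = sorted(contributions, key=lambda c: c["confidence"], reverse=True)
    return {
        "lead": ranked[0]["hypothesis"],
        "alternatives": [c["hypothesis"] for c in ranked[1:]],
        "sources": [c["agent"] for c in ranked],
    }


async def diagnose(incident: str) -> dict:
    # Both agents reason over the same input at the same time.
    contributions = await asyncio.gather(
        network_agent(incident), identity_agent(incident)
    )
    return synthesize(list(contributions))


result = asyncio.run(diagnose("Users cannot reach the CRM"))
```

The point of the synthesis step is that no agent's output is silently discarded: the weaker hypothesis stays attached to the result as an alternative.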

👤 Evolving Human Position

Binary validation (approve/reject). But a posture that evolves with system maturity? That grows progressively from constant supervision toward exception management? Not in the initial priorities.
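One way to picture such an evolving posture is a review policy that relaxes per action type as a track record builds up. The Python sketch below is hypothetical: `AdaptiveSupervisor`, its thresholds, and the `password_reset` action type are illustrative names and values, not taken from an existing product.

```python
from dataclasses import dataclass, field


@dataclass
class TrackRecord:
    approved: int = 0
    rejected: int = 0

    @property
    def total(self) -> int:
        return self.approved + self.rejected

    @property
    def approval_rate(self) -> float:
        return self.approved / self.total if self.total else 0.0


@dataclass
class AdaptiveSupervisor:
    min_reviews: int = 20            # assumed threshold, tune per context
    min_approval_rate: float = 0.95  # assumed threshold, tune per context
    records: dict[str, TrackRecord] = field(default_factory=dict)

    def needs_review(self, action_type: str) -> bool:
        """Human review stays mandatory until autonomy has been earned."""
        record = self.records.get(action_type, TrackRecord())
        earned = (
            record.total >= self.min_reviews
            and record.approval_rate >= self.min_approval_rate
        )
        return not earned

    def record_review(self, action_type: str, approved: bool) -> None:
        record = self.records.setdefault(action_type, TrackRecord())
        if approved:
            record.approved += 1
        else:
            record.rejected += 1


supervisor = AdaptiveSupervisor(min_reviews=3, min_approval_rate=0.9)
before = supervisor.needs_review("password_reset")   # no history yet
for _ in range(3):
    supervisor.record_review("password_reset", approved=True)
after = supervisor.needs_review("password_reset")    # track record established
supervisor.record_review("password_reset", approved=False)
regressed = supervisor.needs_review("password_reset")  # rate fell to 3 out of 4
```

Autonomy here is reversible: a rejection that pulls the approval rate below the threshold puts the action type back under constant supervision. In practice the reviews recorded after autonomy is granted would have to come from sampled audits or user feedback, which this sketch does not model.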

These aspects weren't details. They were precisely what would allow a system to move from "works" to "grows".

When Industry Maturation Meets Field Experience

That's when I joined WEnvision, the SFEIR Group entity specialized in generative AI. As I discovered RAISE, their agentic platform, and exchanged with their passionate teams, a unique opportunity presented itself.

The world had evolved since 2023. Solutions had matured, frameworks had multiplied, use cases had diversified. This ecosystem maturation finally allowed us enough perspective to formalize a complete vision.

Our 2023 intuitions (reasoning observability, memory structuring, cognitive cooperation, evolving human position) weren't just "best practices". They were the pillars of a coherent architectural vision.

That's how what we now call adaptive agentic was born.

The Four Pillars of Adaptive Agentic

The questions we asked ourselves in 2023 became formalized architectural principles:

How to build agents that evolve, not just execute?

→ Reasoning Transparency: understand why the agent decides, not just what it does

How to structure memory so it's alive, not frozen?

→ Intelligent Organizational Memory: separate what's stable (organization) from what evolves quickly (missions)

How to make agents truly cooperate?

→ Intelligently Collaborating Agents: parallel reasoning and contribution synthesis

How to grow autonomy with confidence?

→ Adaptive Supervision: control that evolves with system maturity

This vision didn't appear out of thin air. It results from the convergence of our early 2023 insights, the industry's maturation in 2024, and our work on RAISE, which lets us make it concrete today.

Why This Article Series Now?

Because in 2025, hundreds of companies are launching into agentic. Thousands of demos impress. But many will hit the same blind spots we identified.

This series presents our complete journey: from diagnosis to principles, from principles to implementation.

Part 1 - The Diagnosis

Why agentic that "almost works" doesn't work. The four systematic traps that prevent systems from growing.

Part 2 - The Principles

Adaptive agentic: reasoning transparency, organizational memory, agent collaboration, adaptive supervision.

Part 3a - Implementation (Part 1)

How RAISE implements these principles in production. Architecture, design, and operationalization.

Part 3b - Implementation (Part 2)

Multi-agent orchestration, dynamic knowledge graphs, and closed-loop feedback mechanisms.

What You'll Discover

If you're a CEO, CTO, or responsible for an AI initiative in your organization, this series will probably save you months.

Because you might be:

- ✓ Evaluating agentic frameworks that seem complete but don't cover essential aspects

- ✓ Celebrating impressive demos while sensing something's missing for production

- ✓ Focusing on orchestration and execution without thinking about system evolution

- ✓ Looking for how to move from prototype to production without rebuilding everything

We've been there. We've identified the aspects that make the difference. We've formalized them. And we've built a platform that embodies them from the start.

What we present is neither theoretical nor speculative. It's the result of a maturation journey: from field intuition in 2023, to industry confirmation in 2024, to complete formalization with RAISE today.

Between these experiences lies a mature vision that others can adopt right now.

This series is that vision.
